The value of a Definition of Done

We’ve recently established a “definition of done” (DoD) in one of our development teams. In this article, we’re going to talk about:

- Why we did it

- How we did it

- What the results were

Let’s go ➡️

Objectives: Why did we do it?

Taken literally, the value of a definition of done is a common agreement on when a feature is ready to be shipped, or when it can be considered “finished”. But that is not exactly why we did it, because we didn’t really have this issue. What we had was a misalignment on how much effort to put into the work that comes in addition to the feature itself: for example, monitoring, documentation, and fixing bugs that surface after the initial delivery.

In a way, we were not satisfied with an implicit and minimalist “coded, tested, shipped” definition of done.

In addition, and more important than the result itself, the process was crucial to us: taking a moment all together to take a step back, to listen to everyone’s opinion, and to iterate together towards a more efficient team.

Process and tool: How did we do it?

As with software development, an iterative mindset is helpful: start with a draft, and keep adjusting it. Once the draft exists, hold a retrospective on it after a couple of sprints. Here’s how our workshop went:

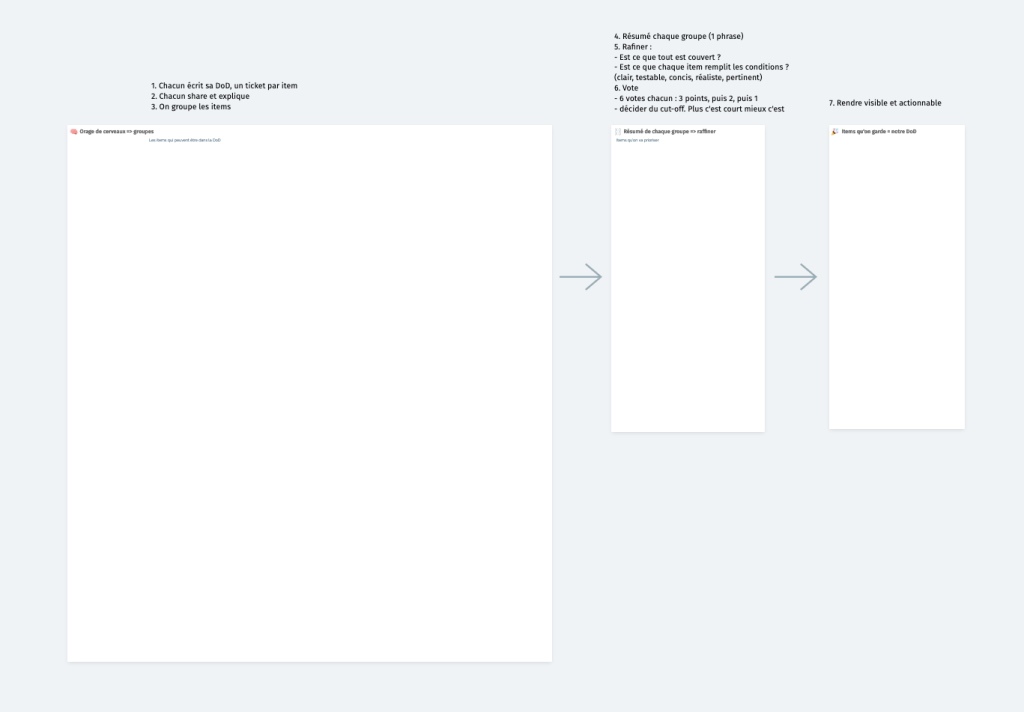

Start by explaining the process and the benefits you’re expecting from the workshop. Here’s the process:

- Individual and silent brainstorm, in which each participant writes their definition of done, one item per note. Optionally, the organizer can prompt the team with some subjects to illustrate the expectations of this phase and broaden everyone’s perspective

- Each participant takes a turn sharing and explaining their notes

- Group the notes

- Sum up each group with a sentence. This sentence will be an element in the definition of done

- Refine these sentences. Ensure that, put together, they cover everything and that each sentence meets the following criteria:

  - The item is clear of any ambiguity

  - The item is testable and can be either met or not, with no grey zone

  - The item is concise

  - The item is realistic: only put actions you will actually do, not merely dream of

  - The item is relevant: this is where the jokes get left behind 🙂

- Take a vote. Each participant has 6 votes to spread across 3 items: 3 votes for their favorite item, 2 for the next, and 1 for the last. Decide where you draw the line on the number of items to keep. Between 3 and 5 is a good idea.

- You have your definition of done! Now make it visible and actionable. How to do it is another decision you will have to make as a team
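The voting step is simple arithmetic, and it can be sketched in a few lines of Python. This is a minimal illustration, not the tool we used: the `tally_votes` function, the `keep` parameter, and the sample ballots are all hypothetical. Each ballot ranks three distinct items, which receive 3, 2, and 1 votes respectively.

```python
from collections import Counter

# Weights from the workshop: 3 votes for the favorite, 2 for the next, 1 for the last.
WEIGHTS = (3, 2, 1)


def tally_votes(ballots, keep=5):
    """Return the `keep` highest-scoring items as (item, score) pairs.

    Each ballot is a sequence of three distinct items, ordered from
    favorite to least favorite.
    """
    scores = Counter()
    for ballot in ballots:
        if len(ballot) != len(WEIGHTS) or len(set(ballot)) != len(WEIGHTS):
            raise ValueError(f"a ballot must rank 3 distinct items: {ballot!r}")
        for item, weight in zip(ballot, WEIGHTS):
            scores[item] += weight
    return scores.most_common(keep)


# Hypothetical ballots, for illustration only.
ballots = [
    ("docs", "tests", "dashboard"),
    ("tests", "docs", "monitoring"),
    ("tests", "monitoring", "docs"),
]
selected = tally_votes(ballots, keep=3)
print(selected)  # [('tests', 8), ('docs', 6), ('monitoring', 3)]
```

The sketch does not settle ties at the cut-off line. In a workshop, that is a good moment for a short discussion rather than an automatic rule.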

We used Metro Retro, a tool we know and love, with the board split into 3 boxes:

- The first box for the brainstorms and groups

- The second where new notes are created for the summaries of the groups

- The third where the selected items are moved to

Results

For a team of 7, we planned 1.5h. It took us 2h, and we had no time left to discuss how to make it actionable! We recommend allocating at least 2h and considering splitting it into two sessions.

The team’s feedback was mostly positive, with some doubts about whether the DoD would be used and would change anything. Indeed, making it actionable is key, and we decided to simply require the fulfillment of the DoD to mark a feature as “delivered” in the table listing the company’s ongoing projects.

The objective described at the beginning has been met. We reached a common agreement on the deliverables accompanying the feature itself, and on how to identify whether these deliverables have been done. In addition, the resulting DoD has “forced” us to make an effort on monitoring and documentation, although it took some discipline. Both the process itself and its result have proven valuable.

A parting gift

To end this article on a concrete note, here’s our definition of done:

- A dashboard measures the impact of the feature

- The monitoring shows no major error for a period set in advance

- A test plan has been written, covers the main and edge cases, and has been executed on the feature

- Automated tests have been written and the coverage satisfies the team

- Technical and functional documentation has been written
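For teams that track projects in a script or a small internal tool, the rule of only marking a feature as “delivered” once the DoD is fulfilled could be sketched as below. This is an assumption-laden illustration: we track this in a simple table, so the `Feature` class, the shorthand item labels, and the “in progress” status are all hypothetical.

```python
from dataclasses import dataclass, field

# Shorthand labels (hypothetical) for the five items of the definition of done above.
DEFINITION_OF_DONE = (
    "impact dashboard",
    "clean monitoring period",
    "test plan executed",
    "automated tests",
    "documentation",
)


@dataclass
class Feature:
    name: str
    fulfilled: set[str] = field(default_factory=set)

    def missing_items(self) -> list[str]:
        """List the DoD items not yet fulfilled, in DoD order."""
        return [item for item in DEFINITION_OF_DONE if item not in self.fulfilled]

    def status(self) -> str:
        """A feature is 'delivered' only when every DoD item is fulfilled."""
        return "delivered" if not self.missing_items() else "in progress"


# Hypothetical feature, for illustration only.
feature = Feature(
    "CSV export",
    {"impact dashboard", "clean monitoring period", "test plan executed", "automated tests"},
)
print(feature.status(), feature.missing_items())  # in progress ['documentation']

feature.fulfilled.add("documentation")
print(feature.status())  # delivered
```

Listing the missing items, rather than returning a bare yes or no, keeps the remaining work visible to the whole team.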